A/B Testing: How to Optimize Based on Your Data?

Hello everyone!

I’m Ettore, a 28-year-old Italian, and I have been living in Spain since the beginning of my career. I started out at an email marketing company, where I discovered the affiliate world. Since then, I’ve been obsessed with buying media online, and I’ve worked as a media buyer for different networks (both CPA networks and traffic platforms) and as an individual affiliate.

In this post, we will analyze how to properly conduct an A/B test and, more importantly, how to implement the conclusions of our tests in our advertising campaigns.

Principles of correct A/B testing

We can think of A/B testing as a controlled experiment that gives us data we can use to increase the conversion rate of a particular marketing asset, such as a landing page, an advertising campaign, an ad spot on our website, etc.

But how?

When conducting an A/B test, we develop and launch two versions of the same element and measure which one works better, so that we can make data-driven changes to the structure of our campaign (or landing page, or website, etc.).

Below, we will analyze how to properly use A/B testing on the different components of an advertising campaign in order to make it successful.
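To decide whether one version really "works better" and the gap is not just noise, one common option is a two-proportion z-test on the conversion rates. This post does not prescribe a specific statistical method, so take the following as a minimal sketch: the function name and the sample numbers are hypothetical, and the test assumes independent visitors and reasonably large samples.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rates of versions A and B.

    conv_* are conversion counts, n_* are visitor (or click) counts, n_* > 0.
    Returns (z, p_value), where p_value is two-sided.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (e.g. zero conversions on both sides).
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers, for illustration only.
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a conventional threshold) suggests the difference is unlikely to be random, but it is still worth confirming the trend with more spend before acting on it.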

A/B testing for images

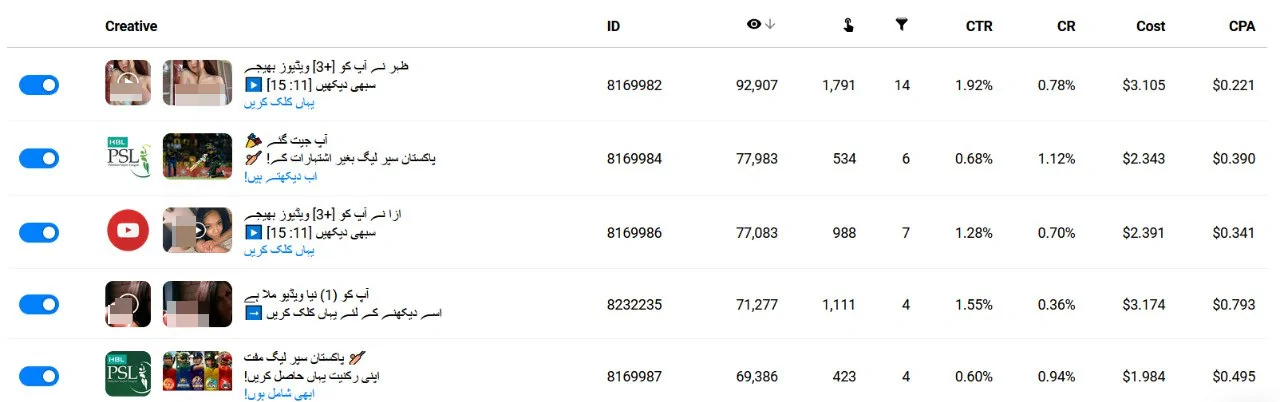

A/B testing on images helps us find patterns among the components of the pictures that proved to work best. In this phase, we first test the different angles we can come up with for our campaign. Let’s take this set of creatives as an example:

This set of creatives was used for a mobile content campaign targeting PK (Pakistan), and the landing page was a streaming service for watching the PSL (Pakistan Super League).

As you can see, the creatives used in this campaign are very different. That is because in this phase I was A/B testing angles: a very conversion-oriented one, which said something like “Watch PSL without advertisement”, and a more aggressive, clickbaity one with a girl saying something like “I sent you a video”.

As expected, the conversion-oriented angle had the best conversion rate but a poor CTR, while the clickbaity one got far more clicks and still converted at a decent rate.

In this case, I decided to create two separate campaigns with two different sets of creatives: one with only “clickbaity” creatives, the other with only “conversion-oriented” creatives. I did this to confirm the trend from the previous test and to find a real winner between the two approaches. Long story short, the clickbaity one won.
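A creative with a lower conversion rate can still win because the two rates multiply: conversions per impression equal CTR times conversion rate. The sketch below shows that arithmetic. The function name and all figures are hypothetical and are not the actual results of the PSL campaign, and the comparison assumes traffic is paid per impression.

```python
def funnel_metrics(impressions, clicks, conversions):
    """Return CTR, conversion rate and conversions per 1000 impressions."""
    ctr = clicks / impressions if impressions else 0.0
    cr = conversions / clicks if clicks else 0.0
    return {
        "ctr": ctr,
        "cr": cr,
        # CTR x CR = conversions per impression; scaled to 1000 impressions.
        "conv_per_1000_impr": ctr * cr * 1000,
    }


# Hypothetical numbers, for illustration only.
conversion_oriented = funnel_metrics(impressions=100_000, clicks=500, conversions=25)
clickbaity = funnel_metrics(impressions=100_000, clicks=2_000, conversions=60)

for name, m in [("conversion-oriented", conversion_oriented), ("clickbaity", clickbaity)]:
    print(f"{name}: CTR={m['ctr']:.2%} CR={m['cr']:.2%} "
          f"conv/1000 impr={m['conv_per_1000_impr']:.2f}")
```

In this made-up example the clickbaity set converts a smaller share of its clicks, yet it produces more conversions from the same number of impressions.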

We can say this is sort of an extreme case, but we might want to A/B test angles in different ways. For example, we can approach a dating offer for straight males with various angles:

– “Teen-looking” vs. “mature-looking”

And then, going more in depth:

– Close-ups on specific body parts vs. photos showing only the girl’s face

– Selfies vs. casual photos

– Blonde vs. brunette, etc.

The general idea is that once we find a winning angle, we keep A/B testing the other visual components of our campaign.

We can always dig deeper with our tests, but in many cases, to make a test as reliable as possible, it is best to create a new campaign and test the new ideas separately to confirm the trend.

A/B testing for textual components

Now, let’s have a look at the following set of creatives:

In this phase, we have already identified the “winning angle” and a couple of best-performing images and icons, and we’re now A/B/C testing some texts.

It’s generally best to start a campaign with at least 4-6 creatives and, along the way, add more variations of the creatives that generated the best results.

Once we’ve run the first test with our first set of creatives and identified the winners, we continue by A/B testing the rest of the variables of our campaign.

With the textual parts, you can push this even further and play with titles and descriptions (and/or brand names, depending on the network), isolating just one of the two components, as in the example below:

Here I A/B tested only the description of this push campaign.

A/B testing for targeting variables

While it is obvious that desktop and mobile should be tested in separate campaigns, it might be less obvious for other targeting components.

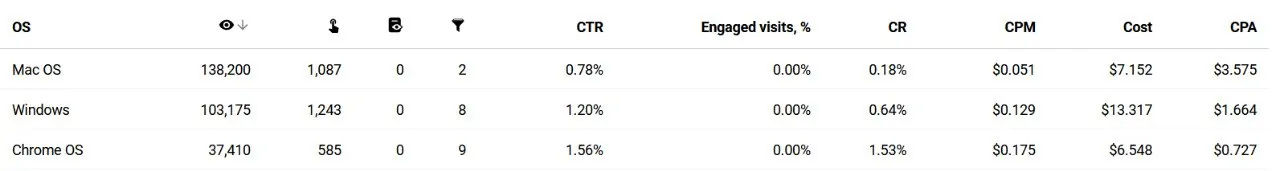

Let’s now have a look at this desktop campaign:

Looking at the performance of this RON* campaign, we can see immediately that eCPA differs greatly between operating systems. In a case like this, we might need to A/B/C test Mac, Windows, and Chrome OS in separate campaigns (if the trend is confirmed later with a higher spend).

This is good practice mainly because it allows us to optimize all the other variables of each campaign separately and, ultimately, to reach a lower overall eCPA.
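Spotting this kind of gap starts with computing eCPA (spend divided by conversions) per segment. Here is a minimal sketch, assuming we can export rows of segment, spend, and conversions from the traffic platform. The function name, the row layout, and the figures are hypothetical and do not come from the campaign discussed here.

```python
def ecpa_by_segment(rows):
    """Aggregate spend and conversions per segment and return eCPA.

    rows: iterable of (segment, spend, conversions) tuples.
    Returns {segment: eCPA}, with None where a segment has no conversions.
    """
    totals = {}
    for segment, spend, conversions in rows:
        prev_spend, prev_conv = totals.get(segment, (0.0, 0))
        totals[segment] = (prev_spend + spend, prev_conv + conversions)
    return {
        segment: (spend / conv if conv else None)
        for segment, (spend, conv) in totals.items()
    }


# Hypothetical export, for illustration only.
rows = [
    ("Windows", 120.0, 40),
    ("Mac", 90.0, 10),
    ("Chrome OS", 30.0, 0),
    ("Windows", 30.0, 10),
]
for os_name, ecpa in ecpa_by_segment(rows).items():
    label = "no conversions yet" if ecpa is None else f"${ecpa:.2f}"
    print(f"{os_name}: eCPA {label}")
```

The same helper works for any other targeting variable, since the segment is just a label.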

*By RON (run-of-network) campaign I mean a campaign that is running for the first time and has neither a whitelist nor a blacklist yet.

The same approach can be used with all the other targeting variables of our campaigns, for example user activity. Consider the data of the campaign below:

In this case, since medium and low perform similarly, we could keep them together and split the high level off into a separate campaign, or we could A/B/C test all three user activity levels separately.

Wrapping up

A/B testing is certainly a powerful weapon when it comes to conversion optimization.

One thing to keep in mind: don’t cap the number of tests you run. We can almost always improve a result, even when we think we can’t.

Finally, always analyze the data and the results obtained. They are the key to improving our campaigns’ results.

Disclaimer. The views expressed in this article are those of the author and do not necessarily reflect the official position of PropellerAds.