Creative Fatigue in the Age of AI: Why More Variations ≠ Better Results

More Creatives, Better Results? Not Quite…

There was a time when launching more creatives almost guaranteed better performance. You tested a few angles, and each iteration gave you a chance to find something that clicked.

Boom, you found a winner, and from then on, scaling felt relatively straightforward.

These days, with AI, you can spin up hundreds of creatives almost instantly. Swap a headline, tweak a background, change a face or a color… and you’ve got another shiny new variation ready to go. Naturally, it’s easy to assume:

If I have more creatives, I can find more winners.

If you’ve tested this in practice, you’ve probably noticed something strange: at some point, more creatives stop helping (and can even start dragging your performance down). Results get unstable, CPAs creep up, and nothing really sticks with users anymore.

The question is: where does it all start to fall apart?

When “More” Starts Working Against You

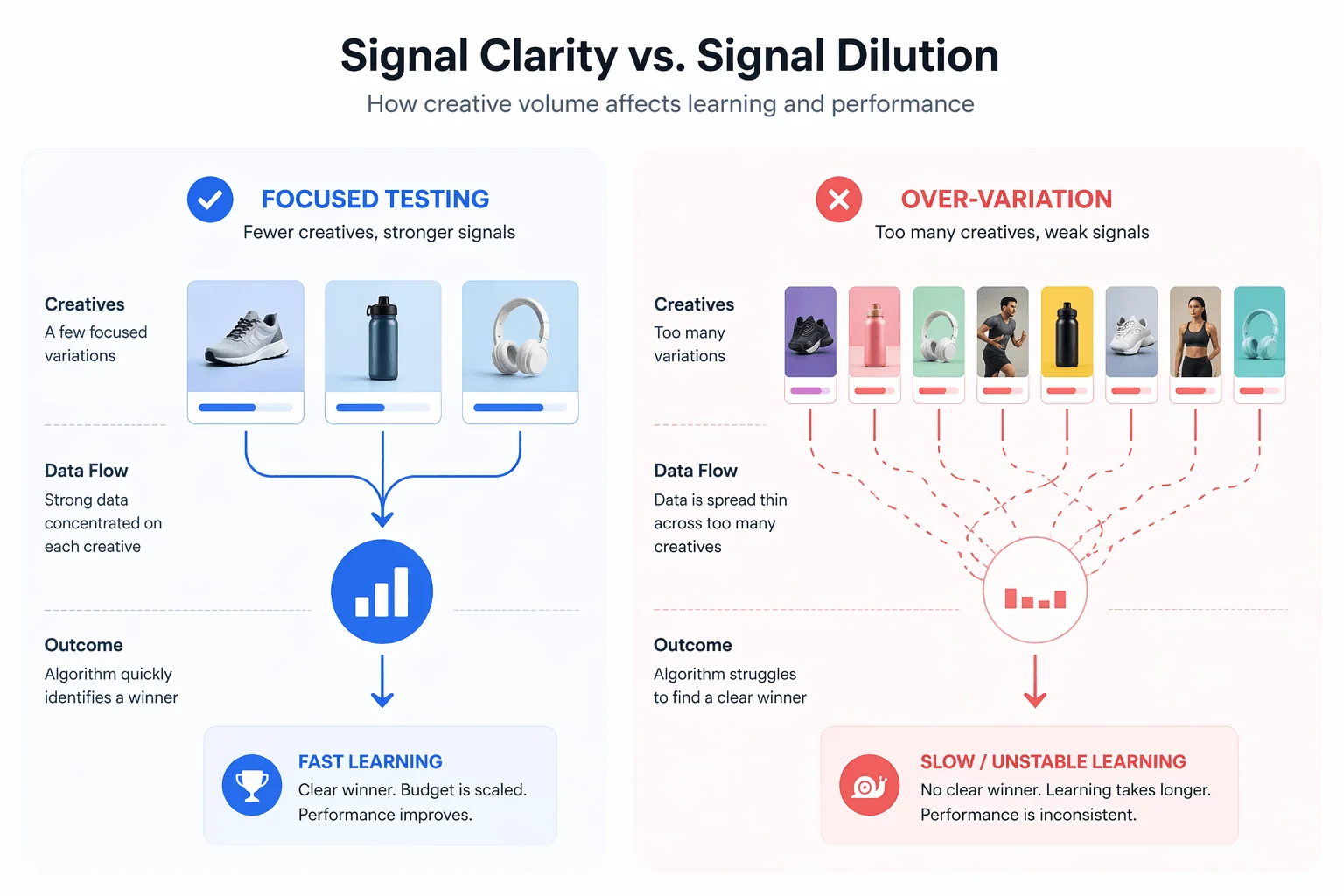

The issue isn’t AI itself but how the sheer volume it produces interferes with learning. Advertising systems optimize for repeatable, consistent signals, not for variety.

When too many creatives are launched at once:

- Each one gets limited delivery

- Data fragments across variations

- No single pattern gathers enough weight

Instead of reinforcing what works, the system keeps restarting its assumptions. This is often where campaigns get stuck in extended learning phases or show unstable performance – not because nothing works, but because nothing gets enough data to prove it works.

Of course, there’s a structural side to this, too. Think of it this way:

Imagine you launch a large batch of creatives at once. The platform starts testing them, but each one receives only a small share of traffic. Early signals come in, but they’re weak and inconsistent. Instead of doubling down on a clear winner, the system keeps spreading budget across multiple directions. What you end up with is shallow testing: nothing gets enough exposure to prove itself, so optimization never fully kicks in.
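To put rough numbers on that fragmentation, here is a back-of-the-envelope sketch in Python. It is not how any ad platform actually allocates delivery (those systems aren’t public). It assumes a hypothetical test budget of 100,000 impressions split evenly across creatives, a hypothetical 2% CTR shared by every creative, and a simple normal-approximation (Wald) confidence interval, which gets crude at low click counts. The names `ctr_margin` and `split_budget` are illustrative only.

```python
import math

# Hypothetical inputs for illustration only, not real campaign data.
TOTAL_IMPRESSIONS = 100_000  # fixed test budget, split evenly across creatives
TRUE_CTR = 0.02              # assume every creative has the same true CTR


def ctr_margin(ctr: float, impressions: int, z: float = 1.96) -> float:
    """Half-width of an approximate 95% (Wald) confidence interval for a CTR."""
    return z * math.sqrt(ctr * (1 - ctr) / impressions)


def split_budget(total: int, n_creatives: int, ctr: float) -> tuple[int, float]:
    """Impressions each creative gets, and how uncertain its measured CTR stays."""
    per_creative = total // n_creatives
    return per_creative, ctr_margin(ctr, per_creative)


if __name__ == "__main__":
    for n in (5, 50, 200):
        per_creative, margin = split_budget(TOTAL_IMPRESSIONS, n, TRUE_CTR)
        clicks = TRUE_CTR * per_creative
        print(f"{n:>3} creatives: {per_creative:>6} impressions each, "
              f"~{clicks:.0f} clicks, CTR {TRUE_CTR:.2%} ± {margin:.2%}")
```

Under these assumptions, 5 creatives each get 20,000 impressions and a margin of roughly ±0.19 percentage points, while 200 creatives each get 500 impressions (about 10 clicks) and a margin of roughly ±1.2 points. A measured CTR anywhere from about 0.8% to 3.2% is then consistent with the same 2% creative, so an early “winner” can easily be noise.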

This dynamic doesn’t only affect how algorithms learn; it shows up in how people react, too. Imagine a user seeing your offer a few times, but each time it looks slightly different. New visual, new wording… basically the same idea dressed up in a different way. Instead of building familiarity, it creates a small moment of doubt. The ad isn’t consistent enough to be recognized, yet not different enough to stand out either.

There’s a concept in behavioral research called choice overload. In a study by Sheena Iyengar and Mark Lepper (2000), shoppers offered a larger assortment (24 jams rather than 6) were less likely to buy anything at all. It’s not exactly the same situation, but the pattern is familiar. When the message keeps shifting, it never quite “sticks.” And if it doesn’t stick, it’s easy to scroll past without thinking twice.

People Are Getting Tired of “AI Ads” (Even If They Don’t Realize It)

There’s also a shift happening on the user side that’s easy to underestimate. People won’t always say “this is AI,” but they pick up on patterns faster than we think. You’ve seen it: perfectly polished visuals, familiar hooks, compositions that feel almost too clean. Nothing is technically wrong with them. In fact, they often look better than most manually produced ads. The problem is that they start blending together: one ad looks like the next, just with minor tweaks.

When everything feels similar, attention drops.

This ties directly into what’s long been known as banner blindness. Research conducted by the Nielsen Norman Group (2018) shows that users learn to ignore anything that looks like an ad, regardless of how relevant it actually is.

“Users often ignore anything that looks like an advertisement, even if it is relevant.”

What’s changed is the format, not the behavior. It’s no longer just banners being filtered out but entire creative patterns. When AI-generated ads follow similar structures, the brain groups them together almost instantly. At that point, you’re no longer competing on quality; you’re trying to break through something much deeper: a familiarity that users have learned to ignore.

Even the Algorithms Are Feeling It

At the same time, the platforms themselves are moving in a new direction.

Meta Platforms is already moving toward fully automated ad systems, where AI takes care of almost everything: generating creatives, choosing audiences, adjusting delivery in real time. In theory, this removes a lot of manual work. You upload an asset, set your goal, define a budget, and the system handles the rest.

Apart from making things more efficient, this process introduces a side effect that doesn’t get talked about much.

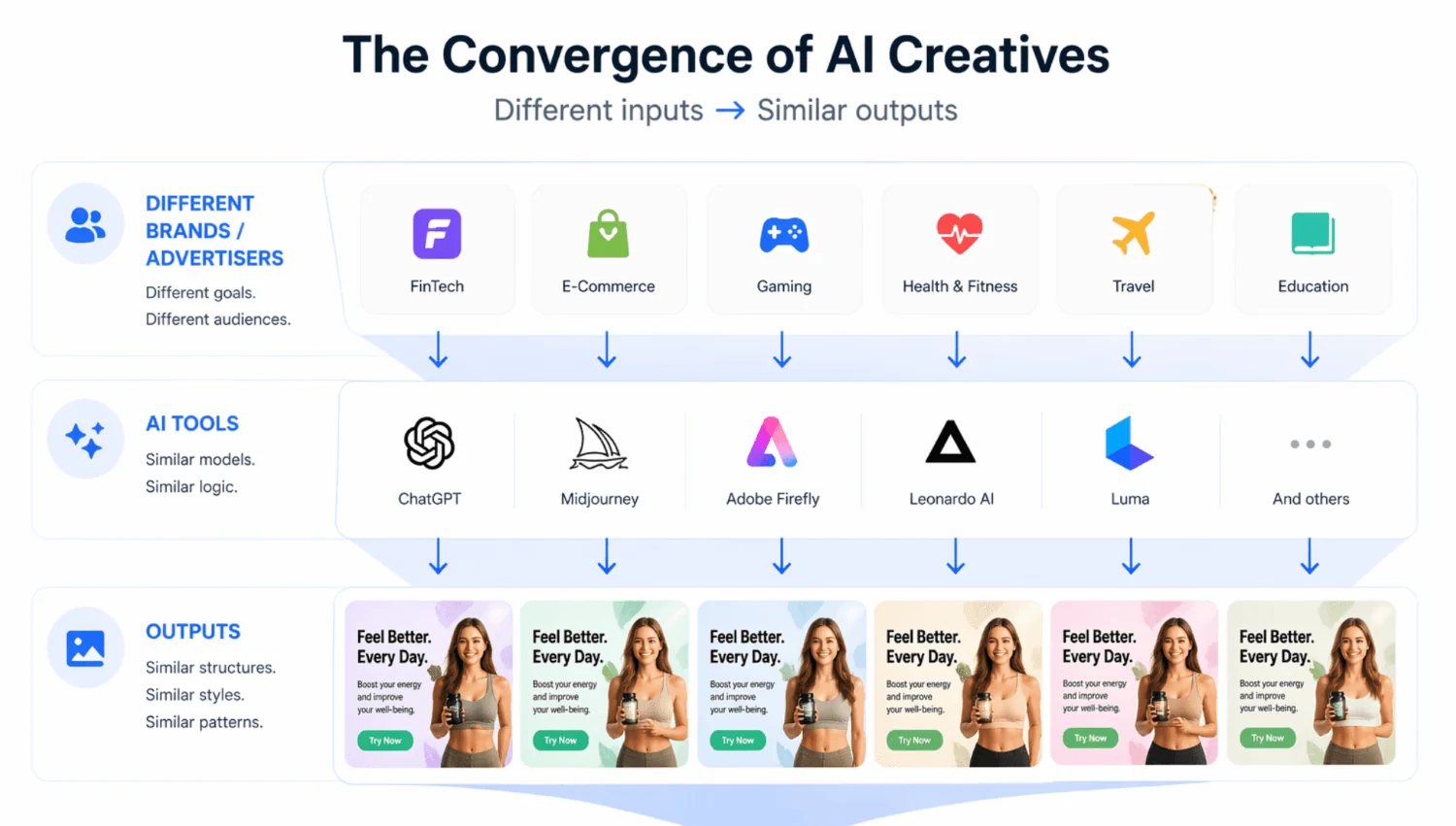

When everyone is using similar tools to generate creatives, the output starts to look… similar. The advertisers and brands are different, but the logic and structure are the same. You still have variations, but they often feel like surface changes (e.g., new colors, new faces, slightly different wording) that make little to no difference to the overall picture.

From the algorithm’s side, this creates a kind of “blur.” Too many similar signals, not enough distinction between what actually works and what doesn’t. Instead of quickly locking onto a strong pattern, the system has to work harder to separate one from another. That slows things down and makes performance less predictable.
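The same statistics help explain the “blur.” The sketch below borrows the standard two-proportion sample-size formula from A/B testing (it is not Meta’s or any platform’s internal method) to estimate how many impressions each of two creatives needs before a difference in their CTRs can be reliably detected. The CTR values, the 5% significance level, and the 80% power are hypothetical assumptions, and `impressions_per_variant` is an illustrative name.

```python
import math
from statistics import NormalDist


def impressions_per_variant(p1: float, p2: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate impressions needed per creative to tell CTR p1 from CTR p2.

    Uses the standard normal-approximation sample-size formula for comparing
    two proportions with a two-sided test.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # ~1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # ~0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


if __name__ == "__main__":
    # Hypothetical CTRs: a near-identical variation vs. a genuinely different concept.
    surface_tweak = impressions_per_variant(0.020, 0.021)
    new_concept = impressions_per_variant(0.020, 0.030)
    print(f"2.0% vs 2.1% CTR: ~{surface_tweak:,} impressions per creative")
    print(f"2.0% vs 3.0% CTR: ~{new_concept:,} impressions per creative")
```

Under these assumptions, separating a 2.0% creative from a 2.1% one takes roughly 315,000 impressions each, while separating 2.0% from 3.0% takes roughly 3,800. Surface-level tweaks tend to produce the first situation; genuinely different concepts are more likely to produce the second.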

From the user’s side, it’s even more straightforward. Ads start blending together. Nothing looks wrong, but nothing really stands out either. You scroll, you see it, and you move on.

According to research conducted by the Ehrenberg-Bass Institute, distinctive brand assets (such as visuals, colors, and consistent creative style) play a key role in recognition and memory, which directly impacts how effective an ad is.

When those distinctive elements are missing or constantly changing, the ad becomes harder to recognize, and much easier to ignore.

So What Actually Works Now?

Expert View by Dennis Stets, 888STARZ Partners representative.

Where does performance stay stable, and where does it start to fall apart?

Instead of guessing, it makes more sense to look at what holds up in real campaigns.

To get a clearer picture, we asked our team. They work with campaigns every day, see how creatives behave at scale, and know what actually makes a difference once you move past theory.

When advertisers use automated creative generation tools, how does performance usually change after scaling the number of variations?

We usually see a very typical pattern. At the testing stage, CTR goes up quickly – but it doesn’t last long. The initial spike is often followed by a decline.

The core issue with blindly scaling creatives through AI is what we’d call “synthetic diversity.” On paper, it looks impressive: generate hundreds of creatives and you get endless testing opportunities. In reality, though, it’s often the same idea wearing different outfits. A new background here, a different button color there, maybe a slightly different face or headline. Dig deeper, and nothing really changes…

Users notice this faster than advertisers think. After a while, the creatives start feeling weirdly familiar, like seeing the same ad over and over again with a fresh coat of paint. Technically, yes, they’re different. Practically? Not different enough to create a new reaction.

As a result, algorithms start picking up on declining engagement and repetitive user behavior. Relevance drops, traffic becomes more expensive, and while volume increases, it doesn’t translate into quality leads.

Do you observe faster creative fatigue in campaigns that rely heavily on AI-generated assets?

Yes, this is definitely something we see.

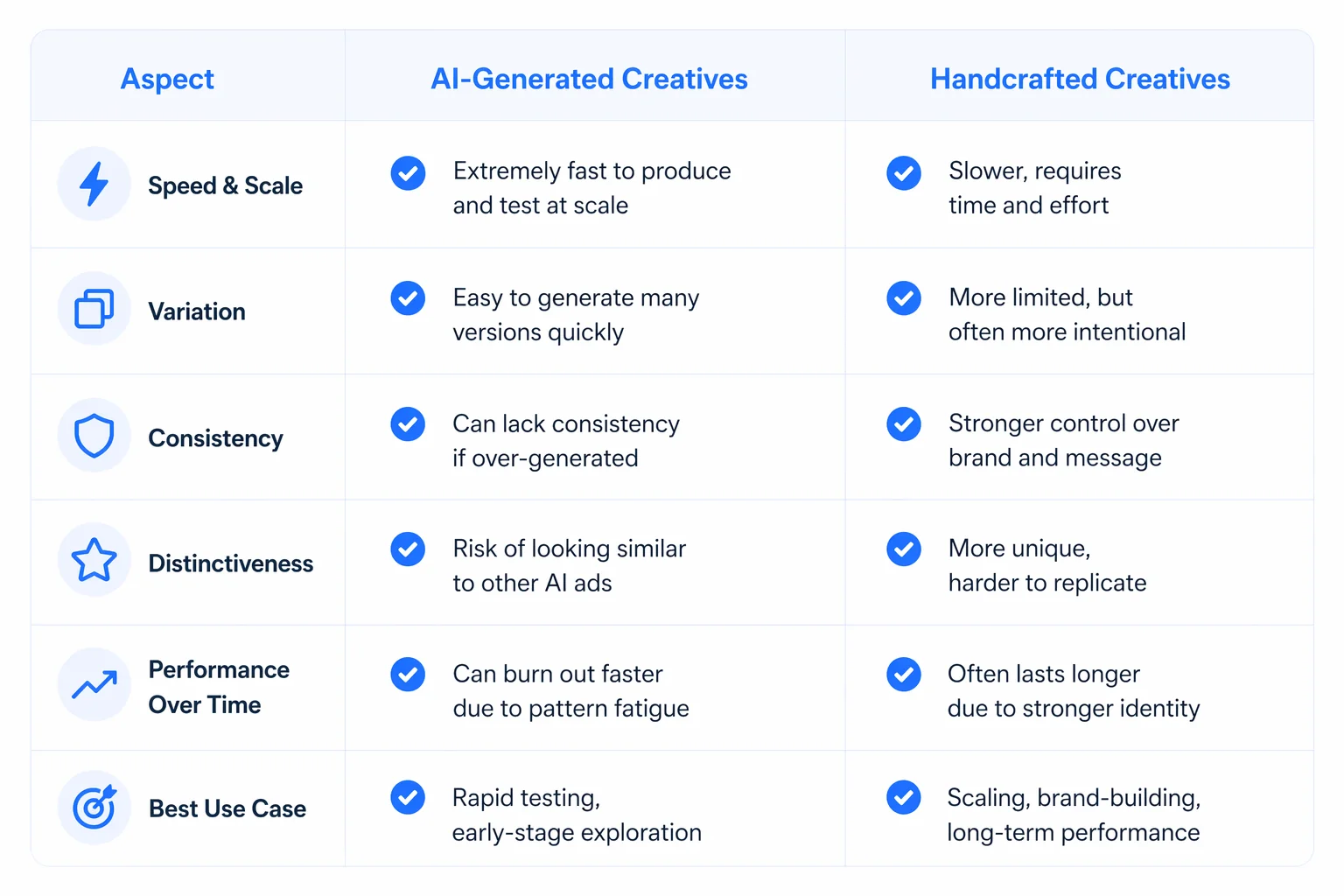

When AI-generated creatives are used at scale without a clear creative strategy, they tend to burn out faster. The reason is quite simple – generative models naturally “average things out.” As a result, the market gets flooded with visually similar, overly polished creatives.

Users start developing a kind of “banner blindness” toward this style. These creatives often lack a strong emotional trigger or a sense of uniqueness (something that actually stops the scroll and drives conversion).

How do PropellerAds creatives differ in terms of stability and signal clarity compared to AI-heavy campaigns?

It really comes down to format. PropellerAds works mainly with Push and Pop traffic, where users have just a fraction of a second to react. In this kind of environment, complex visuals don’t win; clarity does.

Campaigns overloaded with AI-generated graphics often try to do too much visually, which ends up diluting the core message.

What tends to perform better here is:

- Short, clear text

- A straightforward offer

- A strong call-to-action

- Simple visual elements

This creates a much cleaner signal for the algorithm and leads to more stable ROI compared to visually noisy creatives.

That said, from a macro perspective, the question isn’t entirely black and white. AI itself is capable of producing highly distinctive and visually strong content. For example, in other industries like gaming, some environments (such as those in Clair Obscur: Expedition 33) have been generated using AI and still feel unique.

So in theory, AI could produce much more original creatives. The issue is that in advertising, it’s often used for speed and scale rather than for building something truly distinctive.

In your experience, what is the optimal balance between creative volume and performance stability?

The key to success is prioritizing quality over quantity. Instead of scaling hundreds of variations of an average creative, it’s far more effective to work with a smaller set of strong concepts, usually between 5 and 10. Each should be built around a specific psychological trigger (e.g., FOMO, exclusivity, or local insights).

Once you’ve found something that works, automation becomes useful for:

- Adapting formats

- Localizing content

- Resizing assets

Long-term stability comes from a strong creative foundation, not from mass-producing variations.

What are the most common mistakes advertisers make when scaling creatives with automation?

Actually, there are a few recurring ones:

- Letting AI define the strategy – Trying to delegate not just production, but the creative thinking itself. The result may look fine visually, but lacks real marketing logic.

- Ignoring local context – AI often generates generic, global-looking creatives. Without adapting them to a specific GEO (e.g., payment methods, cultural cues, and trust signals), conversion drops.

- Prioritizing volume over quality – Focusing on the number of creatives as a KPI leads to weaker performance and lower user trust.

- Repeating the same message – Even with visual variation, the core message often stays the same. Users quickly lose interest, and fatigue sets in faster.

Overall, AI is a powerful tool for speeding up production and testing. However, without a clear strategy, it creates the illusion of scale, weakens signal quality, and accelerates creative fatigue. Right now, the winners are not the ones generating the most, but the ones who control quality, focus on ideas rather than just variations, and scale with intention rather than volume.

Why Simpler Environments Sometimes Perform Better

Once you step away from creative volume, another pattern becomes easier to notice:

Not all environments demand the same from your creatives.

In feed-based platforms, your ad is just one more post in a constant stream. Think about how people actually use these apps: scrolling on autopilot, switching attention every few seconds. Your creative has to interrupt that flow, explain the value, and spark interest almost instantly. That’s a lot to ask from a single impression.

It’s a bit like trying to start a conversation in a crowded room. Even if you say something interesting, there’s a good chance it gets lost in the noise.

Now compare that with more direct formats.

Push notifications and popunders feel more like a one-on-one moment, because there’s less competition for attention in that exact second. The user isn’t juggling ten different inputs but reacting to one. That’s why a simple, direct message can perform better there than a highly polished visual.

You see this in practice quite often. For example:

- A highly designed, AI-generated creative might perform well in social feeds at first, but fatigue quickly as users scroll past similar-looking ads.

- The same offer, presented as a short, direct push notification (“Limited offer – available ONLY today”), can keep converting longer simply because it doesn’t rely on standing out visually every time.

Or take pop traffic:

- Instead of the creative competing inside a feed, the user lands directly on the page. There are no distractions and, therefore, no comparison in that moment. The creative doesn’t need to “win attention” because it already has it.

Verdict: Volume ≠ Impact

AI has made creative production effortless. What used to take days now takes minutes. Testing is faster, scaling feels easier, and there’s always another variation ready to go. Performance, though, hasn’t gotten any simpler.

More creatives don’t automatically improve results. In many cases, they just add noise: signals get diluted, patterns become harder to read, and real winners take longer to surface. Users, meanwhile, don’t see “variations”; they see impressions. When those impressions feel repetitive or inconsistent, attention drops before the message has a chance to land.

AI scales production, but it doesn’t guarantee impact.

The campaigns that actually hold up tend to keep things tight – fewer ideas, but ones that are clear and consistent enough to stick. Because if everything is changing all the time, what exactly is the user supposed to remember?

Join our Telegram for more insights and share your ideas with fellow affiliates!