The Ransomware Economy in 2026: How Cybercrime Exploits Gaps in the Ad Supply Chain

Ransomware used to be a problem security researchers worried about. That changed around 2017. By 2025, it had evolved into something far more structured: a global industry running on franchise economics, complete with professional support tiers, SLAs, and affiliate programs. And here’s the part the advertising industry has been slow to reckon with: a meaningful share of its distribution flows through the least-monitored corners of the programmatic ecosystem – open exchanges, unvetted intermediaries, and arbitrage chains where accountability dilutes hop by hop.

Cybersecurity Ventures’ damage projections estimated global cybercrime costs at $10.5 trillion annually by 2025. That number needs context. It covers everything: ransom payments, remediation, downtime, incident response, and long-tail reputational damage across all cybercrime categories.

What this article covers is the ransomware economy as it actually functions – its operational logic, its 2026 trajectory, and specifically why it creates direct consequences for affiliates, media buyers, and advertisers working across programmatic and performance channels.

New to ransomware basics? CISA and Microsoft Security are good starting points. This piece picks up from there – it’s for ad industry practitioners who want to understand what the ransomware economy means for the channels they work in every day.

The Business Model Nobody Wants to Call What It Is

“Ransomware as a service” (RaaS) first appeared in threat intelligence writing around 2016, but it took nearly a decade for the model to make sense outside security circles.

By 2024–2025, the major RaaS operations look less like traditional criminal enterprises and more like franchise networks, with onboarding requirements, performance expectations, and partner structures that wouldn’t look out of place in a legitimate B2B SaaS business.

How RaaS Works

The anatomy works like this. A core developer group builds and maintains the technical infrastructure: encryption toolkits, victim payment portals, negotiation interfaces, and data leak sites used for double extortion – the tactic of threatening to publish stolen data unless payment is received, separate from or in addition to the encryption-based ransom demand.

They then recruit affiliates who handle the actual intrusion and deployment work. Across the major RaaS operations of 2023–2024 (LockBit, ALPHV/BlackCat, Play), affiliates typically retained 70–80% of ransom payments collected; the core group kept 20–30% for infrastructure and what amounts to technical support. Newer operations have pushed that split further in the affiliate’s favor — RansomHub, for example, is documented by CISA as letting affiliates retain roughly 90% and remit only 10% to the core group, a structural escalation in the competition for skilled operators.

The FBI and CISA’s joint advisories on LockBit and ALPHV/BlackCat document affiliate rule sets, tiered revenue splits, and technical onboarding infrastructure that are, structurally, not far from a vendor partner program.

Chainalysis’ annual crypto crime reporting has tracked increasing professionalization in payment timing, negotiation consistency, and infrastructure reliability across major RaaS groups, with some operations maintaining dedicated staff for victim communications.

There’s a third layer that most coverage misses: access brokers. These are independent operators who don’t build or deploy ransomware – they just sell the way in. That means compromised RDP credentials, unpatched VPN vulnerabilities, and valid employee logins harvested through phishing. They sell these footholds to RaaS affiliates for a flat fee or a cut of the eventual ransom.

Access brokers are the wholesale suppliers of the ransomware supply chain, and they’re also why law enforcement takedowns tend to produce displacement rather than elimination.

When Operation Cronos dismantled LockBit’s infrastructure in February 2024, the affiliates didn’t retire. Most migrated to RansomHub, a group that launched the same month, absorbed the displaced talent, and ended 2024 as the most prolific RaaS operation by volume.

The RaaS operational stack, simplified:

| Layer | Role | Details |
|---|---|---|
| Layer 01 · Core developers | Build & maintain the platform | Develop encryption malware, run payment portals, host leak sites, and maintain negotiation infrastructure used across affiliate operations. Receives 20–30% of ransom payments. |
| Layer 02a · Access brokers | Sell initial access | Offer compromised RDP credentials, unpatched VPNs, valid logins from phishing. Flat fee or % cut. |
| Layer 02b · RaaS affiliates | Run the intrusion | Buy access, conduct intrusions, deploy payload, exfiltrate data. Receives 70–80% of payments. |
| Layer 03 · Victims | Encryption + extortion pressure | Production systems encrypted, sensitive data exfiltrated and threatened for publication unless payment is delivered. |
| Outcome A · Ransom payment | Cash-out pipeline | Crypto wallets → mixing services → OTC brokers → fiat conversion across multiple jurisdictions. |
| Outcome B · Double extortion | Data leak pressure | Stolen data threatened for publication separately, regardless of whether the encryption ransom is paid. |

What changed by 2026 isn’t that ransomware got more common. It got more segmented:

- Entry-level operators run commodity payloads against small businesses at high volume and low ransom – a numbers game.

- Mid-market groups target specific sectors, spend weeks inside a network before deploying, and research the victim’s revenue and insurance coverage to calibrate their demand.

- At the top end, groups operate more like APTs than criminals: months of reconnaissance, surgical deployment, and ransom demands in the tens of millions against enterprises and critical infrastructure.

The takeaway for anyone outside security research: ransomware in 2026 is not a technical problem with a technical solution. It’s a supply chain with suppliers, distributors, and service tiers, and like any mature industry, it has found efficient ways to reach its market. Including yours.

3 Incidents That Show How RaaS Actually Works

The mechanics of RaaS are easier to understand through what actually happened.

These 3 incidents from 2023–2024 each expose a different part of how the ransomware economy works in practice.

Change Healthcare, February 2024

ALPHV/BlackCat hit Change Healthcare, a UnitedHealth Group subsidiary that processes roughly 40% of US healthcare claims.

The entry point wasn’t sophisticated: compromised credentials on a Citrix remote access portal with no multi-factor authentication. The disruption knocked out prescription processing and insurance claims across pharmacies, hospitals, and clinics for weeks.

UnitedHealth paid approximately $22 million in ransom, then ALPHV pulled an exit scam, taking the payment and shutting down without paying their affiliate.

That affiliate, reportedly known as “Notchy,” resurfaced in RansomHub and threatened to release the data anyway.

UnitedHealth estimated $872 million in direct costs for Q1 2024 alone, with full-year impact exceeding $1.6 billion. Roughly 100 million individuals’ health records were exposed – the largest healthcare data breach in US history.

CDK Global, June 2024

CDK provides dealer management software to around 15,000 car dealerships across North America.

BlackSuit ransomware – a rebrand of the Royal group, which was itself formed by former operators of Conti, one of the most prolific ransomware operations before its 2022 collapse – took down CDK’s systems in mid-June, cutting off dealerships from inventory, sales, financing, and service scheduling simultaneously.

The outage lasted two to three weeks. CDK reportedly paid $25 million to restore operations. The downstream damage extended to manufacturers whose dealer networks couldn’t process orders, a reminder that the economic impact on third parties often exceeds the ransom itself: the Anderson Economic Group estimated collective dealer losses at over $1 billion.

The Nitrogen Malvertising Campaign, 2023–2024

This one matters most to anyone working in advertising, because it used paid search as its primary delivery mechanism.

Sophos and eSentire documented a sustained campaign in which threat actors bought ads on Google and Bing for searches on legitimate software – AnyDesk, WinSCP, Cisco AnyConnect, and TreeSize.

The ads led to pages that looked exactly like official download sites. They delivered a malware loader called Nitrogen, which, in multiple documented cases, led to ALPHV ransomware deployment. The malicious pages cleared ad platform review because the initial landing page was indistinguishable from a legitimate software distributor.

Detection required understanding what happened after the download, not at the creative or URL level.

This last case is worth sitting with. Nitrogen didn’t exploit obscure ad inventory. It ran in paid search – Google and Bing – one of the most scrutinized advertising environments in existence, and it worked for months.

Three groups, three very different targets. What connects them is the same underlying infrastructure: affiliate networks, access brokers, rebranded payloads, and the same economic logic. The ransomware economy doesn’t need to be sophisticated to be effective. It needs to be consistent. And increasingly, it uses the same distribution channels the advertising industry relies on every day.

Ransomware Statistics, 2023–2025: What the Numbers Actually Say

Ransomware statistics come with a built-in lag. Cryptocurrency payment data from Chainalysis is the most reliable measure, but linking wallet activity to specific incidents involves inference. Incident disclosures are timed around legal and insurance considerations, not research. With that context, here’s what the evidence shows.

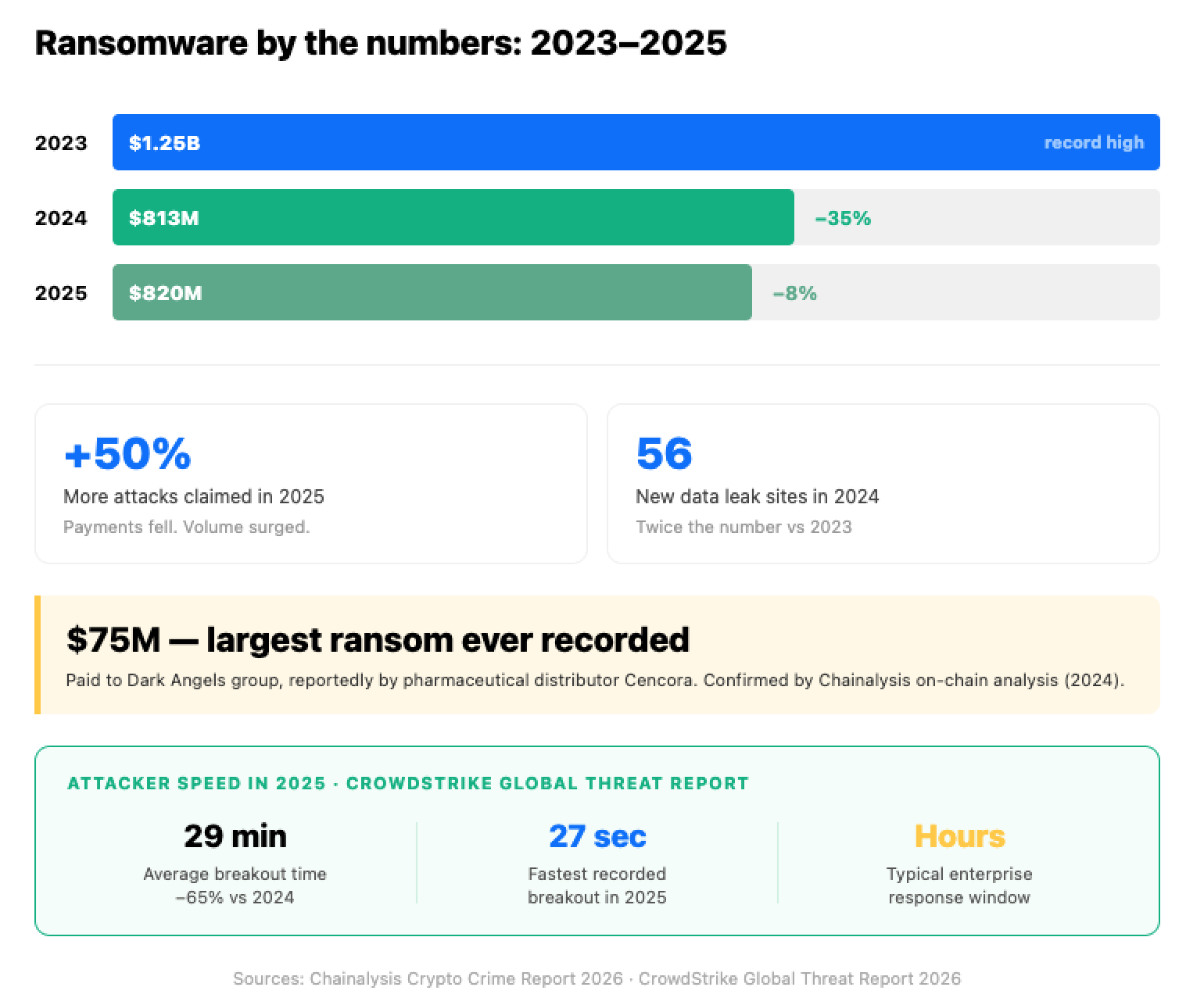

In 2023, ransomware payments broke $1 billion for the first time – a record that held for one year.

In 2024, victims initially appeared to pay attackers roughly $813 million — a 35% drop from 2023’s $1.25 billion. Chainalysis later revised the 2024 figure upward to approximately $892 million in its 2026 Crypto Crime Report as additional payments were attributed to known actors over the course of the year.

That sounds like good news, and partly it is: law enforcement disrupted LockBit and ALPHV, and more organizations refused to pay. But the number of successful attacks hit an all-time high. According to Recorded Future, 56 new data leak sites were created in 2024 – more than twice the number tracked in 2023. Payments fell, but attacks kept rising.

The threat didn’t shrink – it fragmented. In 2025, ransomware payments fell another 8% to roughly $820 million (calculated against the revised 2024 baseline of $892 million), even as claimed attacks rose 50%. The share of victims who paid hit an all-time low of around 28%, per Chainalysis.

One number that cuts through the trend line: the largest single ransomware payment ever recorded was approximately $75 million, paid to the Dark Angels group, reportedly by pharmaceutical distributor Cencora, confirmed by Chainalysis.

Attackers are also getting faster. According to the CrowdStrike 2026 Global Threat Report, the average eCrime breakout time – how long it takes an attacker to go from initial access to spreading through the network – dropped to 29 minutes in 2025, a 65% increase in speed from 2024.

The fastest recorded breakout was 27 seconds. Most enterprise security teams are built around a response window measured in hours. That gap is the problem.
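The year-over-year figures in this section can be re-derived with a few lines of arithmetic. The sketch below uses only numbers quoted above; the helper name `pct_change` is illustrative, and reading "a 65% increase in speed" as a rate ratio of 1.65 is an assumption about how that figure was computed, not something the report wording confirms here.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new; negative means a decline."""
    return (new - old) / old * 100.0

# Ransomware payment totals in millions of USD, as quoted in this section.
paid_2023 = 1250.0
paid_2024_initial = 813.0
paid_2024_revised = 892.0
paid_2025 = 820.0

change_2024_initial = pct_change(paid_2023, paid_2024_initial)
change_2024_revised = pct_change(paid_2023, paid_2024_revised)
change_2025 = pct_change(paid_2024_revised, paid_2025)

# Breakout time. Assumption: "65% increase in speed" means the attacker's
# rate rose by a factor of 1.65, so the earlier time is 1.65x longer.
breakout_2025_minutes = 29.0
implied_breakout_2024_minutes = breakout_2025_minutes * 1.65

print(f"2023 -> 2024 (initial estimate): {change_2024_initial:.0f}%")
print(f"2023 -> 2024 (revised estimate): {change_2024_revised:.0f}%")
print(f"2024 (revised) -> 2025: {change_2025:.0f}%")
print(f"Implied 2024 breakout time: about {implied_breakout_2024_minutes:.0f} minutes")
```

One thing the arithmetic makes visible: against the revised 2024 total, the decline from 2023 is closer to 29% than to the 35% first reported, which is why the baseline matters when comparing years.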

Industries at Risk

Healthcare, manufacturing, critical infrastructure, and legal services consistently top the most-attacked categories across major threat intelligence reports. Additionally, education has grown significantly in incident volume.

These sectors share a common profile: high sensitivity of data, high cost of downtime, and historically under-resourced security teams.

One number worth clarifying before moving on: the $10.5 trillion cybercrime figure from Cybersecurity Ventures covers all crime categories and all cost types. It’s a legitimate aggregate, but it’s not a ransomware number. Treating it as one produces either panic or dismissal – neither leads to good decisions.

Three Ways Ransomware Intersects With Your Ad Campaigns

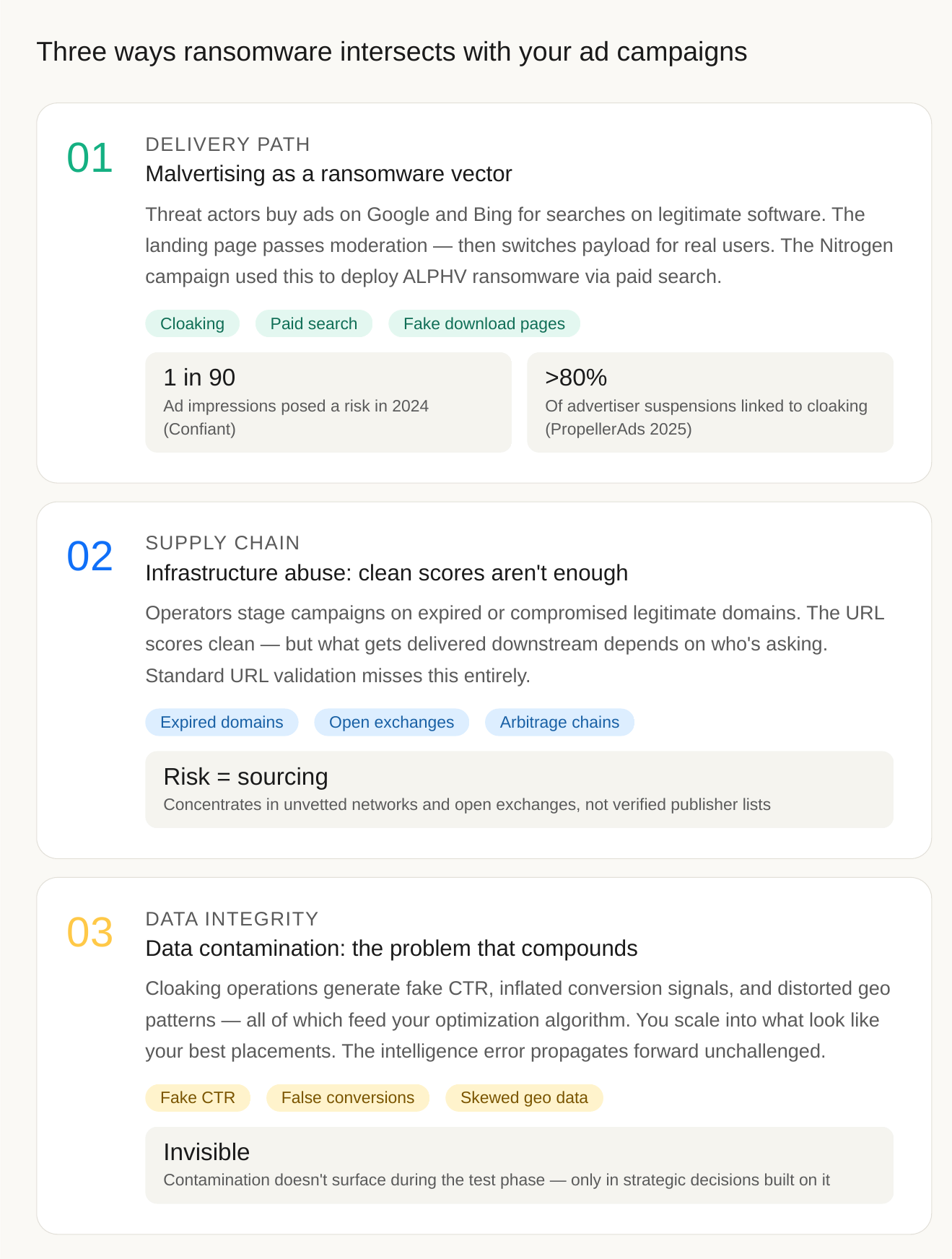

For anyone running ad campaigns at scale, whether in traffic acquisition, affiliate channels, or on the publisher side, the ransomware economy isn’t abstract background context. It creates direct operational consequences across three distinct channels, each with its own dynamics and detection profile.

1. Malvertising as a ransomware delivery path

The Nitrogen campaign described above isn’t a one-off. It’s a documented pattern, and advertising infrastructure is a preferred delivery path: broad reach, legitimate domain associations, and moderation gaps that skilled operators know how to exploit.

Confiant’s Malvertising and Ad Quality Index, which monitors hundreds of billions of ad impressions, has documented persistent operations that use cloaking specifically to pass platform moderation before switching payloads.

The mechanism is worth understanding precisely: a creative reviewed by an automated moderator at 9 AM shows a clean software download page. The same creative served to a real user in Chicago at 2 PM shows a convincing fake landing page that initiates a malicious download. Same URL, completely different experience. Confiant reported that one in every ninety ad impressions posed a risk in 2024.

Cloaking isn’t a policy violation committed carelessly – it’s a campaign specifically engineered around detection. PropellerAds’ own moderation data shows cloaking accounting for more than 80% of confirmed advertiser suspensions in 2025.

The full breakdown of how these patterns evolved across 2022–2025 is documented in the PropellerAds Ads Safety Report 2025.

2. Infrastructure abuse: the problem with clean domain scores

Ransomware operators frequently stage campaigns on expired or compromised legitimate domains – web servers belonging to real organizations that have no idea their infrastructure is being used. For advertisers buying through programmatic channels, this creates a supply chain problem that standard tools don’t catch.

A publisher domain can look completely clean: solid history, high score in URL reputation tools, a real business behind it, and at the same time quietly route users to malicious infrastructure. The domain itself isn’t the threat. The threat is what happens after the URL gets checked: depending on who’s asking, the same address delivers different content. Reviewers and reputation scanners get the clean version. Real users get something else. Standard URL validation sees nothing wrong, because the URL really is legitimate. The only way to catch this is by watching the full chain: what actually gets delivered, all the way through, not just the entry point.
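The "same address, different content" behavior described above can be probed by requesting one URL under several visitor profiles and comparing what comes back. The following is a minimal sketch, not a production scanner: the profile names, the `fetch` callable, and the two fake fetchers are all hypothetical stand-ins so the example runs without network access. Real cloaking also keys on IP range and timing, so header changes alone will miss many cases.

```python
import hashlib
from typing import Callable, Dict

# Hypothetical visitor profiles. Real cloaking also profiles IP ranges,
# timing, and device signals, which header changes alone cannot imitate.
PROFILES: Dict[str, Dict[str, str]] = {
    "scanner": {"User-Agent": "ExampleScanner/1.0"},
    "consumer": {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"},
}

Fetcher = Callable[[str, Dict[str, str]], str]

def fingerprint(body: str) -> str:
    """Hash of the page body after collapsing whitespace."""
    normalized = " ".join(body.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def content_diverges(url: str, fetch: Fetcher) -> bool:
    """True when the same URL yields different content across profiles."""
    prints = {name: fingerprint(fetch(url, headers))
              for name, headers in PROFILES.items()}
    return len(set(prints.values())) > 1

def fake_cloaked_fetch(url: str, headers: Dict[str, str]) -> str:
    """Stand-in for an HTTP client, simulating a cloaked page."""
    if "Scanner" in headers.get("User-Agent", ""):
        return "<html>Official download page</html>"
    return "<html>Official download page<script src='loader.js'></script></html>"

def fake_clean_fetch(url: str, headers: Dict[str, str]) -> str:
    """Stand-in for an HTTP client, simulating an honest page."""
    return "<html>Official  download page</html>"

cloaked = content_diverges("https://example.test/download", fake_cloaked_fetch)
clean = content_diverges("https://example.test/download", fake_clean_fetch)
print(cloaked, clean)
```

A divergence is a signal to investigate, not proof of abuse: legitimate sites also vary content by device or region, so in practice the comparison would need to ignore expected differences and run from multiple network vantage points.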

The practical implication is about sourcing, not just scanning.

Reputable ad networks actively vet publishers, monitor domain quality, and screen traffic for anomalies. The risk concentrates when advertisers buy from less scrutinized sources: open exchanges, unvetted networks, or arbitrage chains where the original traffic origin is several hops removed. A domain that scores clean in a reputation database may still carry risk if the network it sits in doesn’t continuously monitor what’s being delivered downstream.
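"Several hops removed" can be made measurable when bid requests carry the IAB Tech Lab SupplyChain object (`schain`), which lists each intermediary as a node with an `asi` (the selling system's domain) and `sid` (the seller ID within it), plus a `complete` flag. The sketch below assumes the buyer has access to that object; the `MAX_HOPS` threshold and the sample chains are hypothetical, not an industry standard.

```python
from typing import Any, Dict, List

MAX_HOPS = 3  # hypothetical buyer-side threshold, not an industry standard

def assess_supply_chain(schain: Dict[str, Any], max_hops: int = MAX_HOPS) -> List[str]:
    """Return human-readable concerns about one supply chain object."""
    concerns: List[str] = []
    nodes = schain.get("nodes", [])
    if schain.get("complete") != 1:
        concerns.append("chain is incomplete: the traffic origin is not fully declared")
    if len(nodes) > max_hops:
        concerns.append(f"{len(nodes)} hops exceeds the limit of {max_hops}")
    for index, node in enumerate(nodes):
        if not node.get("asi") or not node.get("sid"):
            concerns.append(f"node {index} is missing asi or sid")
    return concerns

# Illustrative chains with made-up domains and seller IDs.
direct_chain = {
    "ver": "1.0",
    "complete": 1,
    "nodes": [{"asi": "exchange.example", "sid": "pub-001", "hp": 1}],
}
long_chain = {
    "ver": "1.0",
    "complete": 0,
    "nodes": [
        {"asi": "reseller-a.example", "sid": "77", "hp": 1},
        {"asi": "reseller-b.example", "sid": "901", "hp": 1},
        {"asi": "reseller-c.example", "sid": "12", "hp": 1},
        {"asi": "exchange.example", "sid": "4410", "hp": 1},
    ],
}

direct_concerns = assess_supply_chain(direct_chain)
long_concerns = assess_supply_chain(long_chain)
print(direct_concerns)
print(long_concerns)
```

A check like this only describes how the path is declared; it says nothing about what each intermediary actually delivers, which is why it complements rather than replaces downstream monitoring.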

3. Data contamination: the problem that compounds

This is the least discussed of the three, and arguably the one with the longest operational tail.

When a ransomware distribution campaign runs through advertising infrastructure, it generates fake engagement signals – inflated click-through rates on specific placements, manipulated conversion signals from bot traffic, and distorted geographic patterns. All of this feeds into the optimization data that media buyers use to make decisions.


Most buying teams focus on the placement quality problem, which is fixable, while the strategic conclusions derived from contaminated data propagate forward unchallenged.
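One way to surface contaminated placements in a buyer's own data is to look for placements that rank near the top on click-through rate but near the bottom on a downstream quality metric. This is a rough sketch under stated assumptions: the `Placement` fields, the `downstream_quality` metric (any post-click measure where higher is better), the `top_n` cutoff, and all sample values are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Placement:
    placement_id: str
    ctr: float                 # clicks divided by impressions
    downstream_quality: float  # any post-click quality metric, higher is better

def mismatched_placements(placements: List[Placement], top_n: int = 2) -> List[str]:
    """IDs that are in the top_n by CTR and the bottom top_n by downstream quality."""
    by_ctr = sorted(placements, key=lambda p: p.ctr, reverse=True)
    by_quality = sorted(placements, key=lambda p: p.downstream_quality)
    top_ctr = {p.placement_id for p in by_ctr[:top_n]}
    low_quality = {p.placement_id for p in by_quality[:top_n]}
    return sorted(top_ctr & low_quality)

# Invented sample data for illustration only.
sample = [
    Placement("plc-A", ctr=0.012, downstream_quality=0.30),
    Placement("plc-B", ctr=0.015, downstream_quality=0.28),
    Placement("plc-C", ctr=0.048, downstream_quality=0.03),
    Placement("plc-D", ctr=0.010, downstream_quality=0.33),
    Placement("plc-E", ctr=0.052, downstream_quality=0.04),
    Placement("plc-F", ctr=0.014, downstream_quality=0.27),
]

suspects = mismatched_placements(sample)
print(suspects)
```

Placements flagged this way are candidates for exclusion from optimization decisions until the supply path has been reviewed, so that conclusions drawn from the test phase are not built on their signals.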

These three vectors form one compounding chain: malvertising is the entry, infrastructure abuse is the camouflage, and data contamination is the tail that keeps distorting decisions long after the attack ends.

The real takeaway for advertisers is that ad-side ransomware risk isn’t a URL or moderation problem – it’s a sourcing problem. Wasted budget is recoverable; strategic conclusions built on contaminated test data are not.

Ad safety has shifted from creative moderation to sourcing discipline: choose networks that continuously monitor what’s delivered downstream, not just what passes intake.

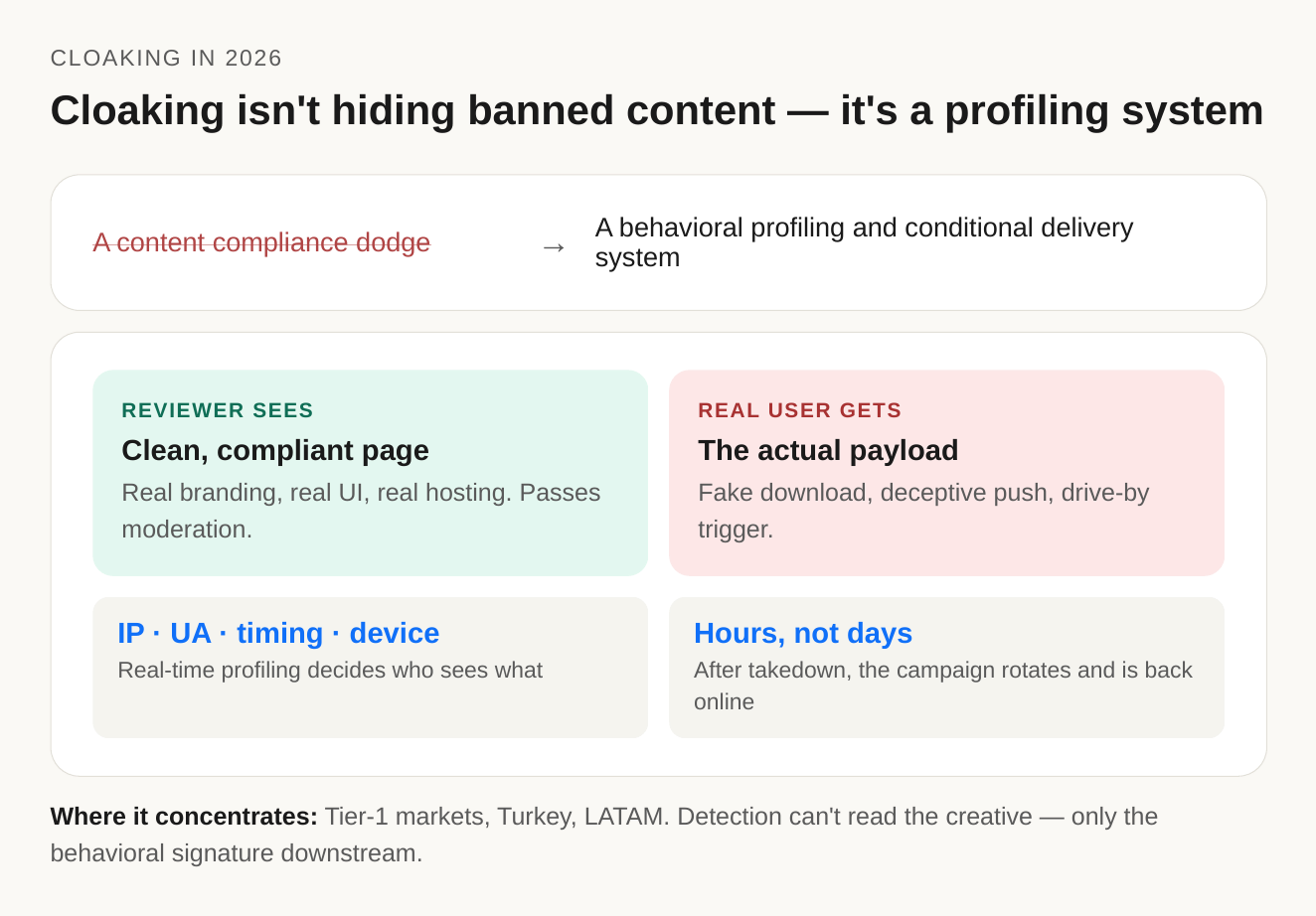

Why Cloaking Is the Preferred Delivery Architecture, Not a Side Effect

Most ad industry coverage treats cloaking as a content compliance issue – someone hiding banned material to slip past moderation. That framing is a decade out of date.

In 2026, advanced cloaking is something more deliberate: a behavioral profiling and conditional delivery system, built specifically to exploit the gap between what moderation sees and what real users actually experience.

The architecture works in layers. Inbound traffic gets profiled in real time. IP ranges separate known moderation and verification services from consumer ISPs. Browser and user-agent fingerprinting filter out automated reviewers. Timing patterns and device-level signals flag anything that looks like a sandbox. Each layer feeds a single decision: is this visitor a reviewer, a crawler, a researcher, or a real target user in a high-value GEO?

The two audiences then see two completely different things. Reviewers get a clean, compliant page. Real users get the actual payload – a fake software download, a deceptive push prompt, a drive-by trigger. The most resilient setups spread delivery across multiple hosts and CDNs, so any single takedown removes one node while the operation rotates servers and rule sets. Within hours, the campaign is back online in a new configuration.

This is exactly what the Nitrogen campaign demonstrated. As Sophos X-Ops documented, the operation worked even inside paid search – one of the most heavily moderated environments in digital advertising. Creative, landing page, and domain all looked legitimate because they were engineered to: real software branding, real UI, real hosting infrastructure. The malicious behavior was conditional, post-click, post-review, and only triggered for the right user profile.

That’s why detection can’t focus on the creative: the creative almost never carries the malicious content.

Instead, it has to focus on behavioral signatures: JavaScript that executes differently for automated visitors, redirect chains that are inconsistently deep relative to a clean-looking page, load patterns that shift with the user agent, and mismatches between what a campaign declares and what it actually delivers downstream.
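Two of the signatures listed above, redirect depth and the mismatch between declared and delivered destinations, can be expressed as simple checks over recorded click paths. The sketch below assumes each visitor profile's redirect chain has already been captured by some crawler; the `Observation` type, the `MAX_HOPS` threshold, and the sample URLs are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List
from urllib.parse import urlsplit

MAX_HOPS = 3  # hypothetical threshold for a page declared as a direct download

@dataclass
class Observation:
    """What one visitor profile saw after clicking the ad."""
    redirect_chain: List[str]  # URLs in order; the last one is the final page

def host_of(url: str) -> str:
    return urlsplit(url).hostname or ""

def behavioral_flags(declared_url: str, observations: Dict[str, Observation]) -> List[str]:
    """Compare declared destination and redirect depth across visitor profiles."""
    flags: List[str] = []
    declared_host = host_of(declared_url)
    hops = {name: len(obs.redirect_chain) - 1 for name, obs in observations.items()}
    for name, obs in observations.items():
        final_host = host_of(obs.redirect_chain[-1])
        if final_host != declared_host:
            flags.append(f"{name}: lands on {final_host}, campaign declared {declared_host}")
        if hops[name] > MAX_HOPS:
            flags.append(f"{name}: {hops[name]} redirects exceeds the limit of {MAX_HOPS}")
    if len(set(hops.values())) > 1:
        flags.append("redirect depth differs between visitor profiles")
    return flags

declared = "https://tool.example.test/download"
observed = {
    "reviewer": Observation([
        "https://ads.example.test/c/1",
        "https://tool.example.test/download",
    ]),
    "consumer": Observation([
        "https://ads.example.test/c/1",
        "https://hop1.example.net/r",
        "https://hop2.example.net/r",
        "https://hop3.example.net/r",
        "https://files.example.net/get",
    ]),
}

flags = behavioral_flags(declared, observed)
for flag in flags:
    print(flag)
```

Each flag on its own has innocent explanations, such as tracking redirects or regional mirrors. The value comes from correlating several of them across campaigns, which is the kind of analysis that only works after approval and at scale.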

According to the PropellerAds Ads Safety Report 2025, cloaking-linked suspicious patterns currently concentrate in Tier-1 markets, Turkey, and LATAM. The economic logic tracks: high-value users make Tier-1 worth the infrastructure investment, while less-monitored regions offer cheaper operational surface for lower-sophistication campaigns.

Traditional Malvertising vs. Infrastructure-Heavy Cloaking in 2025

| Dimension | Traditional Malvertising | Infrastructure-Heavy Cloaking (2025) |
|---|---|---|
| Infrastructure complexity | Single redirect URL or known bad domain | Multi-layer routing across distributed hosting, multiple CDN nodes |
| What moderation actually sees | Malicious URL present in the creative or landing page | Clean URL; malicious content conditionally delivered post-approval to target users only |
| Evasion mechanism | None, or simple IP/user-agent filter | Behavioral fingerprinting classifying reviewer vs. target user before content decision |
| Set-up time and cost | Hours; low cost | Days to weeks; significant infrastructure investment |
| Response to enforcement | Campaign ends with takedown | Operation rotates to alternate nodes; infrastructure reconstitutes within hours |
| Signal quality for detection | High: many obvious anomalies, clear behavioral signatures, and malicious URLs detectable | Low: minimal pre-approval signals, high false-positive risk at low volume, requires post-approval behavioral monitoring at scale |
| Detection implication | URL reputation checks, creative scanning, and known-bad-domain blocklists effective | Requires behavioral post-approval monitoring, fingerprinting the fingerprinter, and cross-campaign behavioral correlation at scale, none of which can be done reliably at pre-bid |

The takeaway isn’t that detection got harder – it’s that the layer where it happens has shifted, and ransomware operators were among the first to exploit that shift. Traditional malvertising lived in the creative or the URL, where pre-approval tooling could see it. Infrastructure-heavy cloaking moves the malicious behavior past review entirely, into the post-approval delivery chain.

Reputable ad networks understand this and have moved their defenses accordingly, toward continuous behavioral monitoring, supply-path analysis, and feedback loops between human review and automated detection. That’s the work the next section is about.

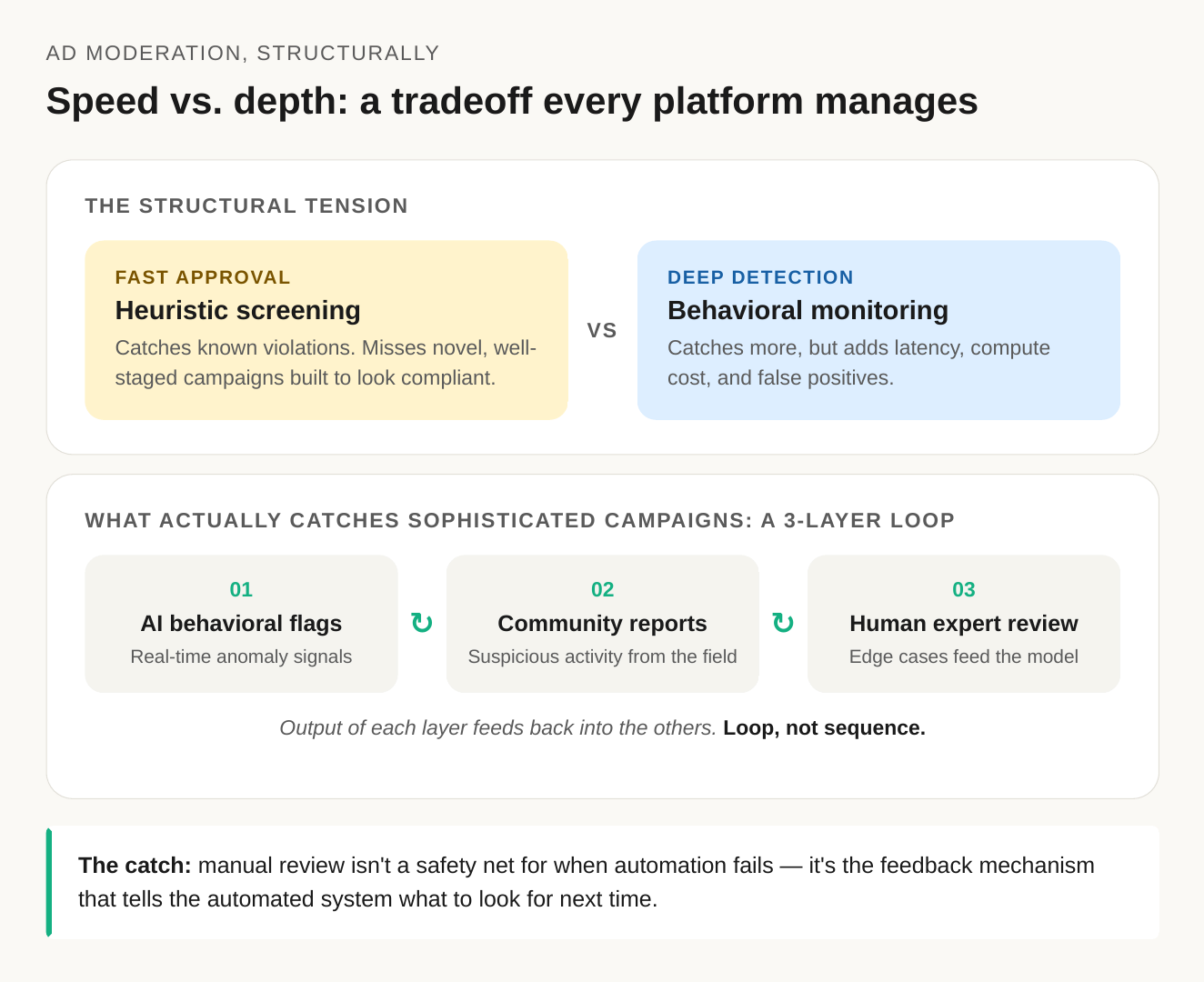

The Speed–Security Tradeoff: How Mature Platforms Manage What No Single Layer Eliminates

Ad moderation runs into a structural tension worth stating plainly: the pressure to approve campaigns fast and the work needed to catch sophisticated evasion pull in opposite directions. Every reputable platform deals with this tradeoff, and the mature ones are open about it. Pretending it can be fully solved leads to architectures that look good on paper but don’t hold up against how adversarial systems actually evolve.

The pressure to approve fast is legitimate. Advertisers expect decisions in minutes. Slow review hurts honest businesses far more than it slows down bad actors. The screening layer that makes fast approval possible (known-bad domain lists, prohibited content categories, obvious creative violations) does its job well. It catches most of what it’s designed to catch.

What it can’t catch, by definition, are novel, well-staged campaigns built specifically to look compliant at review time.

Closing that remaining gap with deeper behavioral monitoring and infrastructure analysis costs latency, compute, and a real false-positive rate that creates friction for legitimate advertisers.

Machine learning detection works under the same limit. Models are only as current as their training data. A campaign using techniques the model hasn’t seen at volume will pass initial review. Detection improves once enough flagged cases pile up to make the pattern visible, but that improvement comes after the first wave of those campaigns has already run. This cold-start window is the gap sophisticated actors design around, and it’s why first-generation cloaking architectures consistently see the highest success rates.

What actually closes the loop is three layers working as one system:

- Behavioral signals from the automated layer

- Reports from publishers and the wider community

- Human expert review of flagged edge cases

The third layer tends to be undervalued. Human review of ambiguous campaigns isn’t a fallback for when automation fails – it’s the feedback that teaches the automated system what to look for next. When manual review feeds the model, detection compounds over time. When it’s treated as a separate appeals queue, the automated layer stays a generation behind whatever’s running in market.

This is the principle PropellerAds’ traffic quality framework is built on: source vetting, real-time behavioral analysis, and manual review of flagged activity work as a single feedback loop, not a sequence of independent gates. The architecture and current performance benchmarks are documented on the PropellerAds Traffic Quality page.

What Media Buyers Should Actually Do

For media buyers, the ransomware economy isn’t an abstract security topic – it’s a set of risks that concentrate in specific parts of the ad ecosystem. Malvertising needs an advertising delivery layer to operate. Cloaking-driven campaigns need environments where post-approval behavior isn’t continuously monitored. Contaminated optimization signals appear wherever traffic quality controls are thin.

The common thread is sourcing: where in the ecosystem you choose to buy, and how rigorously that part of it is vetted. That’s a buying decision before it’s a security one, which is why the recommendations below sit at the buying layer, not in InfoSec’s lane.

- Audit supply chain depth, not just vendor certifications. Industry frameworks like IAB Tech Lab’s sellers.json and the IAB Europe Guide to Supply Path Optimisation exist precisely so buyers can see who actually sits between them and the publisher.

- Treat suspiciously uniform traffic as a signal worth investigating. Targeting narrows your audience; it doesn’t make their behavior uniform. Within a targeted GEO, you should still see regional variance: Alaska and New York don’t behave identically.

Within a device segment, browser and OS distribution should reflect real-world fragmentation. When a set of placements shows engagement that’s unusually flat across dimensions where human behavior is naturally uneven, or conversion rates that uniformly beat every benchmark with no obvious explanation, look at the supply path directly. It might just be a well-optimized segment, but artificial positive signals look identical to exceptional performance until you trace the source.

- Run anomaly detection on your own data, independently. Your analytics layer sees things platform-level monitoring can’t, particularly downstream: conversion quality, refund rates, customer lifetime value, etc. If your highest-CTR placements produce the weakest downstream engagement, that mismatch is more informative than either metric alone.

- Choose partners certified against the right things. The TAG Certified Against Fraud Program, run by the Trustworthy Accountability Group, audits platforms against documented anti-fraud and anti-malvertising standards. TAG’s own benchmark data shows that buying through certified companies dramatically reduces invalid traffic exposure. Certifications aren’t a guarantee, but their absence, particularly when paired with vague answers about post-approval behavioral monitoring, is a signal worth weighing.
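The "suspiciously uniform traffic" check from the list above can be approximated with a coefficient of variation: the standard deviation of a metric across segments divided by its mean. This is a minimal sketch, assuming per-region CTR is already available; the `FLATNESS_THRESHOLD` value and both sample datasets are hypothetical and would need calibrating against a buyer's own history.

```python
import statistics
from typing import Dict

FLATNESS_THRESHOLD = 0.05  # hypothetical cutoff; calibrate on your own history

def coefficient_of_variation(values_by_segment: Dict[str, float]) -> float:
    """Population standard deviation divided by the mean."""
    values = list(values_by_segment.values())
    mean = statistics.mean(values)
    if mean == 0:
        return 0.0
    return statistics.pstdev(values) / mean

def looks_too_uniform(values_by_segment: Dict[str, float],
                      threshold: float = FLATNESS_THRESHOLD) -> bool:
    """True when variation across segments falls below the threshold."""
    return coefficient_of_variation(values_by_segment) < threshold

# Invented CTR values per region, for illustration only.
varied_ctr = {"AK": 0.011, "NY": 0.019, "TX": 0.015, "CA": 0.022}
flat_ctr = {"AK": 0.0300, "NY": 0.0301, "TX": 0.0299, "CA": 0.0300}

varied_is_flat = looks_too_uniform(varied_ctr)
flat_is_flat = looks_too_uniform(flat_ctr)
print(varied_is_flat, flat_is_flat)
```

A flat result is a prompt to inspect the supply path, not a verdict: a well-optimized segment can also look even, so the same check should be repeated across other dimensions such as browser and OS mix before drawing conclusions.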

How to Evaluate an Ad Platform’s Security Posture: What Good Answers Actually Look Like

These four criteria aren’t a full security audit – they’re the minimum first-pass questions a media buyer should be able to get clear answers to before committing significant budget.

1. Certifications and independent audits

External standards like ISO/IEC 27001 and ISAE 3000 matter because they represent independent review of a platform’s security controls, not self-assessments or internal audit reports.

2. Transparency about enforcement data

Aggregate rejection numbers are easy to publish. The useful question is why campaigns get suspended and how that pattern moves over time. A platform that can tell you cloaking-related suspensions are a specific and growing share of enforcement is distinguishing a structurally different threat (infrastructure-heavy evasion) from ordinary policy violations.

Trend direction across multiple reporting periods matters more than any single quarter: it shows whether detection is keeping pace with adversarial pressure. A good answer breaks suspensions down by category, shows movement over time, and separates sophisticated evasion from simple violations.
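When a platform does publish suspensions by category, the comparison described above reduces to computing each category's share per period and the shift between periods in percentage points. The sketch below is illustrative only: the category names and counts are invented and do not represent any platform's enforcement data.

```python
from typing import Dict

def category_shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Each category's share of total suspensions, in percent."""
    total = sum(counts.values())
    if total == 0:
        return {}
    return {category: 100.0 * n / total for category, n in counts.items()}

def share_shift(earlier: Dict[str, int], later: Dict[str, int]) -> Dict[str, float]:
    """Change in share between two periods, in percentage points."""
    before = category_shares(earlier)
    after = category_shares(later)
    categories = sorted(set(before) | set(after))
    return {c: round(after.get(c, 0.0) - before.get(c, 0.0), 1) for c in categories}

# Invented counts for two reporting periods, for illustration only.
period_1 = {"cloaking": 120, "prohibited_content": 60, "misleading_creative": 20}
period_2 = {"cloaking": 225, "prohibited_content": 50, "misleading_creative": 25}

shift = share_shift(period_1, period_2)
print(shift)
```

Shares are used instead of raw counts because total enforcement volume can rise simply with platform growth; a category whose share keeps climbing across periods is the pattern worth asking about.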

3. Post-approval behavioral monitoring

Pre-approval filtering catches known patterns. It misses new ones; by design, fresh evasion is built to look clean at review.

So the question isn’t “do you have AI-based fraud detection?” – everyone claims that. It’s: “When you catch a campaign after it passed review, does that case update detection for next time?”

A good answer describes a real feedback loop. A weaker one treats AI as a finished tool, not something that keeps learning.

4. Whether human review actually feeds back into detection

You probably won’t get a candid answer if you ask, and even a polished one would be hard to verify.

The observable signal is whether enforcement actually evolves over time: rejection rules that get updated, suspension categories that shift between reporting periods, visible response to new evasion patterns.

What the Next Phase Looks Like

The ransomware economy in its current form won’t be disrupted by any single enforcement action, legislative push, or technical countermeasure. The LockBit takedown made this clear at a structural level: dismantling core infrastructure pushes affiliates into competing operations rather than out of the business.

RansomHub absorbed the displaced capacity within months, and ended 2024 as the most prolific RaaS group by volume. The expertise didn’t disappear, and the incentives didn’t change. The access broker market that supplies both is still running.

For advertising professionals, three trends are worth tracking specifically.

- Generative AI in reconnaissance and phishing. By 2026, LLMs are being used at scale to produce personalized spear-phishing content: emails that name specific employees, reference real job titles, and mimic the communication style of legitimate organizational senders.

The Scattered Spider group’s social engineering playbook, calling IT helpdesks and impersonating employees to reset credentials, is the human-driven precursor to what becomes possible when identity fabrication is automated.

For the advertising industry specifically, this lands as a scale problem in identity verification, not a capability one.

Modern KYC systems can already detect most AI-generated documents and deepfake submissions on their own. What’s changing is volume: AI lets fraudsters generate thousands of synthetic-identity variations and run them in parallel across multiple platforms, hunting for the weakest onboarding flow.

As Sumsub’s Julia Andreeva explains, “The real shift isn’t that AI suddenly makes verification ineffective – it’s that AI allows fraudsters to industrialize their attempts, which means platforms need layered defenses that look at identity, behavior, and account relationships together.”

That layered approach (document and biometric verification combined with device telemetry, behavioral signals, and network-level cluster detection) is what PropellerAds runs in cooperation with Sumsub.

- Pure data extortion replacing encryption. Several active groups have stopped encrypting victim systems entirely. They steal data and threaten to publish it instead – faster, quieter, and no decryption-key infrastructure to maintain. The leverage is the same, with significantly less risk.

For advertising, this changes which channels these operators gravitate toward. They no longer need to deliver malware to a victim’s machine; they just need a reliable way to reach the right person. That concentrates the risk in environments with weak advertiser verification and minimal post-approval monitoring.

Channels with layered controls (identity verification, traffic-quality screening, ongoing behavioral analysis) are a poor fit for this kind of operation, and the economics push these groups elsewhere.

- Infrastructure hijacking at scale. Compromising legitimate domains and hosting infrastructure isn’t new; what’s changing is that the scanning and compromise of that infrastructure is increasingly automated.

For advertisers, this isn’t typically a buying-side problem to solve. In network models, individual placements aren’t visible to the buyer; the responsibility for catching compromised infrastructure sits with the platform. Closing the gap requires continuous monitoring of publisher hosting and delivery behavior after onboarding – not a one-time check at intake.

What This Actually Means

The ransomware economy operates as a global industry for the same reason any mature industry does: the incentives that sustain it haven’t been disrupted.

Enforcement actions move operations from one group to the next: LockBit fell, and RansomHub absorbed the capacity within months. But the access broker market, the affiliate revenue model, and the infrastructure layers that supply both are too distributed to be eliminated by hitting any single node.

The ransomware economy isn’t a problem about to be solved. It’s a structural feature of the threat landscape that the advertising industry now has to operate alongside.

For advertising professionals, the reframe that matters most is this: ransomware isn’t only something that happens to other industries and occasionally affects their ad spend. The advertising ecosystem is part of how it operates. It uses ad delivery as a vector for malware. It abuses legitimate but compromised infrastructure to bypass standard URL checks. And it contaminates the data buyers rely on for optimization, turning successful test phases into misleading guides for scale.

Operating well in this environment doesn’t mean treating every platform as a threat – it means choosing the right defensive posture.

- Choose partners that monitor publisher infrastructure and traffic behavior continuously, not just at intake.

- Build independent anomaly detection into your own analytics so platform-level signals aren’t your only source of truth.

- Treat statistically perfect traffic as a question worth asking, not a result worth scaling.

And accept the baseline reality: no single security layer catches everything. The most credible platforms aren’t the ones that promise zero exposure – they’re the ones that describe clearly what they monitor, what they catch, and what no single layer can, and design their architecture around that honest assessment.


Editorial disclosure: PropellerAds operates a digital advertising platform. Internal enforcement data referenced in this article reflects PropellerAds’ own moderation and policy records, labeled explicitly throughout. External statistics are sourced from named third-party research organizations; all external sources are linked inline at the point of citation. This article is produced by PropellerAds for practitioners in the advertising industry and should not be treated as independent academic or threat intelligence research.